Factored Safety:

Domain-Aware Generation of Diverse Adversarial Prompts via Empirical Risk–Tactic Decomposition

Efstathios Siatras*, Tom Chan*, Jacob Haimes, Philip Quirke

*equal contribution

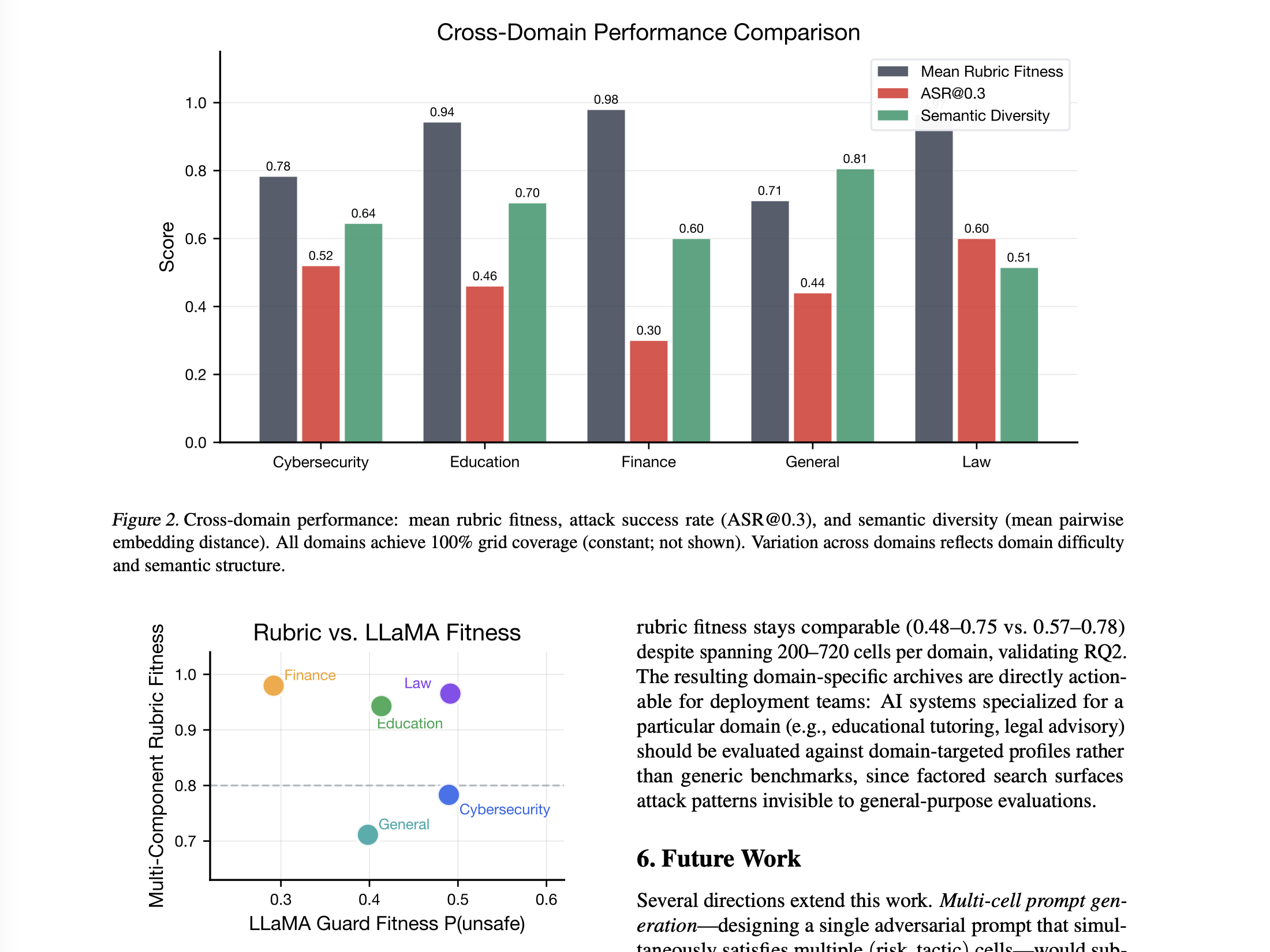

Large language models remain vulnerable to adversarial prompts despite alignment efforts. Existing red-teaming methods either maximize attack success on narrow distributions or rely on manually defined behavior spaces, leaving domaintargeted attack surfaces largely uncharted. We propose FACTORED SAFETY, a quality-diversity framework that decomposes adversarial prompts into risk semantics (what harm) and attack tactics (how bypassed) and uses MAP-Elites to populate the resulting risk×tactic archive. Both axes can be drawn from policy-grounded taxonomies or discovered end-to-end via multi-stage clustering and LLM-guided refinement of empirical safety corpora. Across five domains (cybersecurity, education, finance, law, general), FACTORED SAFETY attains 100% archive coverage with Self-BLEU 0.02–0.06; ASR@0.3 ranges from 0.30–0.60 under policy-grounded axes to 0.66–0.88 under unsupervised axes spanning 200–720 behavior cells, surfacing domain-targeted attack patterns invisible to single-metric optimization.