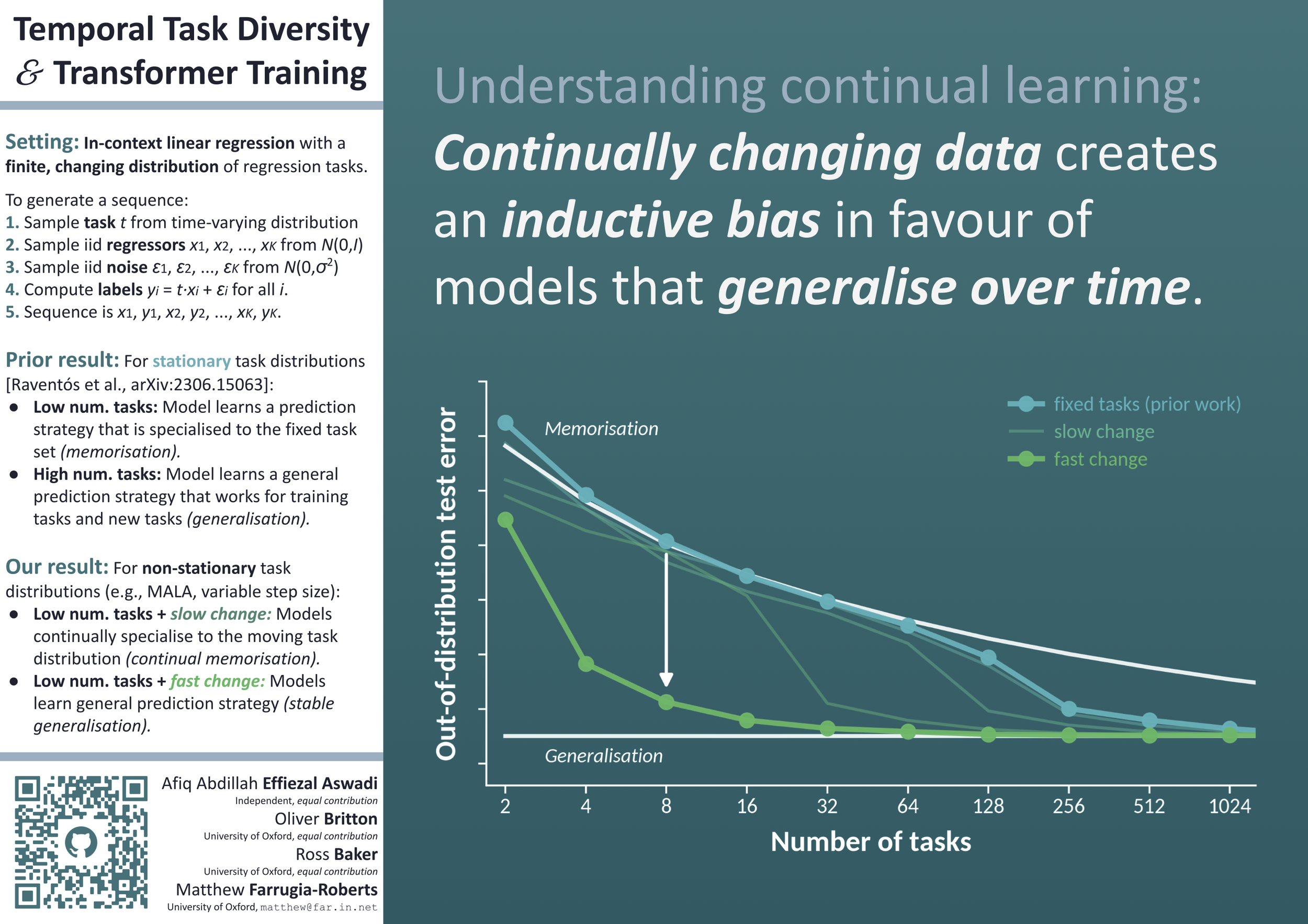

Temporal Task Diversity: Inductive Biases Under Non-Stationarity in Synthetic Sequence Modelling

Afiq Abdillah Effiezal Aswadi*, Oliver Britton*, Ross Baker*, Matthew Farrugia-Roberts

*Equal contribution (random order). Cite as: Effiezal Aswadi et al., 2026.

“Understanding continual learning: Continually changing data creates an inductive bias in favour of models that generalise over time.”

Modern deep learning science often assumes that neural networks learn from a fixed data distribution. However, many practically important learning problems involve data distributions that change throughout training. How does such non-stationarity impact the inductive biases of deep learning towards models with different structural, generalisation, and safety properties? A fruitful testbed for studying inductive bias is in-context linear regression sequence modelling, where small transformers display strikingly different generalisation patterns depending on the diversity of the (fixed) training task distribution. In this paper, we explore the effect of diversifying the task distribution across training time, finding that such temporal diversity leads to an increased bias towards generalisation over memorisation.
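To make the contrast between a fixed and a time-varying task distribution concrete, the following is a minimal sketch of one possible data stream for in-context linear regression. It is an illustration under stated assumptions, not the construction used in this paper: the finite task pool, the Gaussian inputs and weights, the noise level, and the `refresh_every` schedule (redrawing the whole pool at regular intervals) are all hypothetical choices, as are the function names.

```python
import random


def sample_task_pool(num_tasks, dim, rng):
    """Draw a finite pool of regression tasks (weight vectors), entries ~ N(0, 1)."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_tasks)]


def sample_sequence(pool, num_examples, noise_std, rng):
    """Sample one in-context sequence [(x_1, y_1), ..., (x_K, y_K)].

    A single task w is drawn uniformly from the pool and shared by all
    examples in the sequence, with y_k = <w, x_k> + Gaussian noise.
    """
    w = rng.choice(pool)
    sequence = []
    for _ in range(num_examples):
        x = [rng.gauss(0.0, 1.0) for _ in range(len(w))]
        y = sum(wi * xi for wi, xi in zip(w, x)) + rng.gauss(0.0, noise_std)
        sequence.append((x, y))
    return sequence


def training_stream(num_steps, num_tasks, dim, num_examples,
                    refresh_every=None, noise_std=0.1, seed=0):
    """Yield one training sequence per step.

    refresh_every=None : the task pool is fixed for all of training
                         (stationary task distribution).
    refresh_every=R    : ASSUMPTION for illustration only; the pool is
                         redrawn every R steps, so the task distribution
                         changes across training time.
    """
    rng = random.Random(seed)
    pool = sample_task_pool(num_tasks, dim, rng)
    for step in range(num_steps):
        if refresh_every is not None and step > 0 and step % refresh_every == 0:
            pool = sample_task_pool(num_tasks, dim, rng)
        yield sample_sequence(pool, num_examples, noise_std, rng)
```

For example, `training_stream(1000, num_tasks=4, dim=8, num_examples=16)` draws every sequence from the same four tasks, whereas adding `refresh_every=100` exposes the learner to forty tasks over training while keeping only four available at any one time. Other schedules, such as gradually drifting tasks, would be equally valid readings of temporal diversity.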