Further Obstructions to Solving the ELK Problem

Paul Rapoport, Francis Rhys Ward, Korbinian Friedl

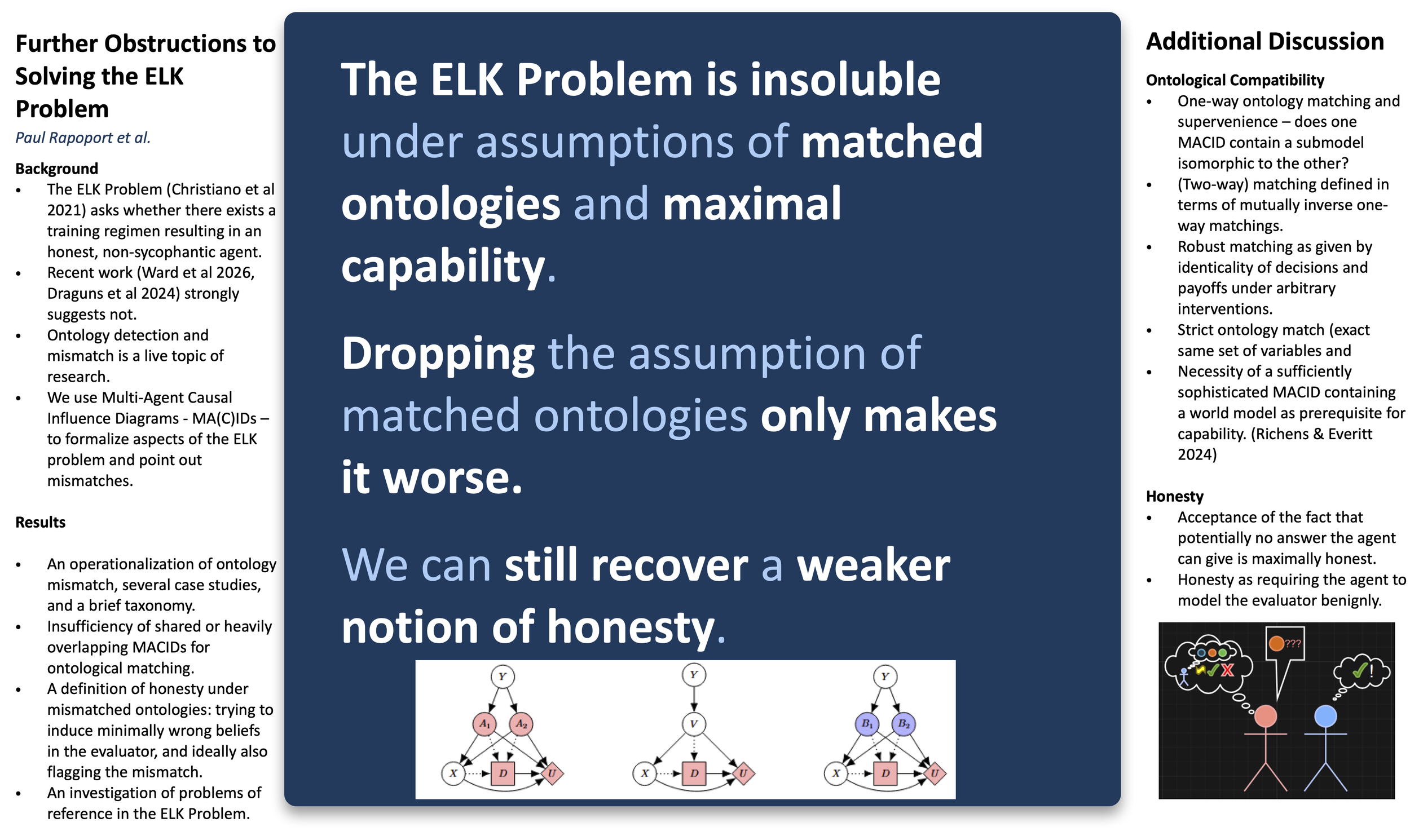

“The ELK Problem is insoluble under assumptions of matched ontologies and maximal capability. Dropping the assumption of matched ontologies only makes it worse. We can still recover a weaker notion of honesty.”

Advanced AI models have extensive knowledge of their environments; in fact, their knowledge may far exceed that of their developers or users. Consequently, a desirable property for an AI system is that it is honest, that is, that it accurately reports its beliefs about the world. Designing an AI system to be honest may be difficult, especially if we want to ask it questions about latent variables in the environment: variables which are hidden from the human interacting with it. This gives rise to the problem of eliciting latent knowledge (ELK): the problem of training an AI agent to honestly report its beliefs. But the capacity for honesty assumed by the statement of the ELK problem itself relies on the AI being able to express itself comprehensibly and unambiguously. This requires two things. First, the AI must share an ontology with its human evaluators: a system for classifying features of the environment and the ways that those features interact. Second, the AI and its evaluators must share common reference: when either one points at some feature of the environment, the other can perceive the same feature and understand its significance. In this paper, we address ontology mismatch and problems of reference, making both formally precise using the language of causal influence diagrams (CIDs), which can be used to describe the relationship between an agent’s training environment and its subjective representation of the world. We also use CIDs and, to a lesser extent, incomplete information multi-agent influence diagrams (IIMAIDs) to formalize the concept of honesty in the same setting.