Adversarial Genomic Sequences Could Evade Biosecurity Screening

Jeyashree Krishnan, Ajay Mandyam Rangarajan, Andrea Loehr, Jason Hoelscher-Obermaier

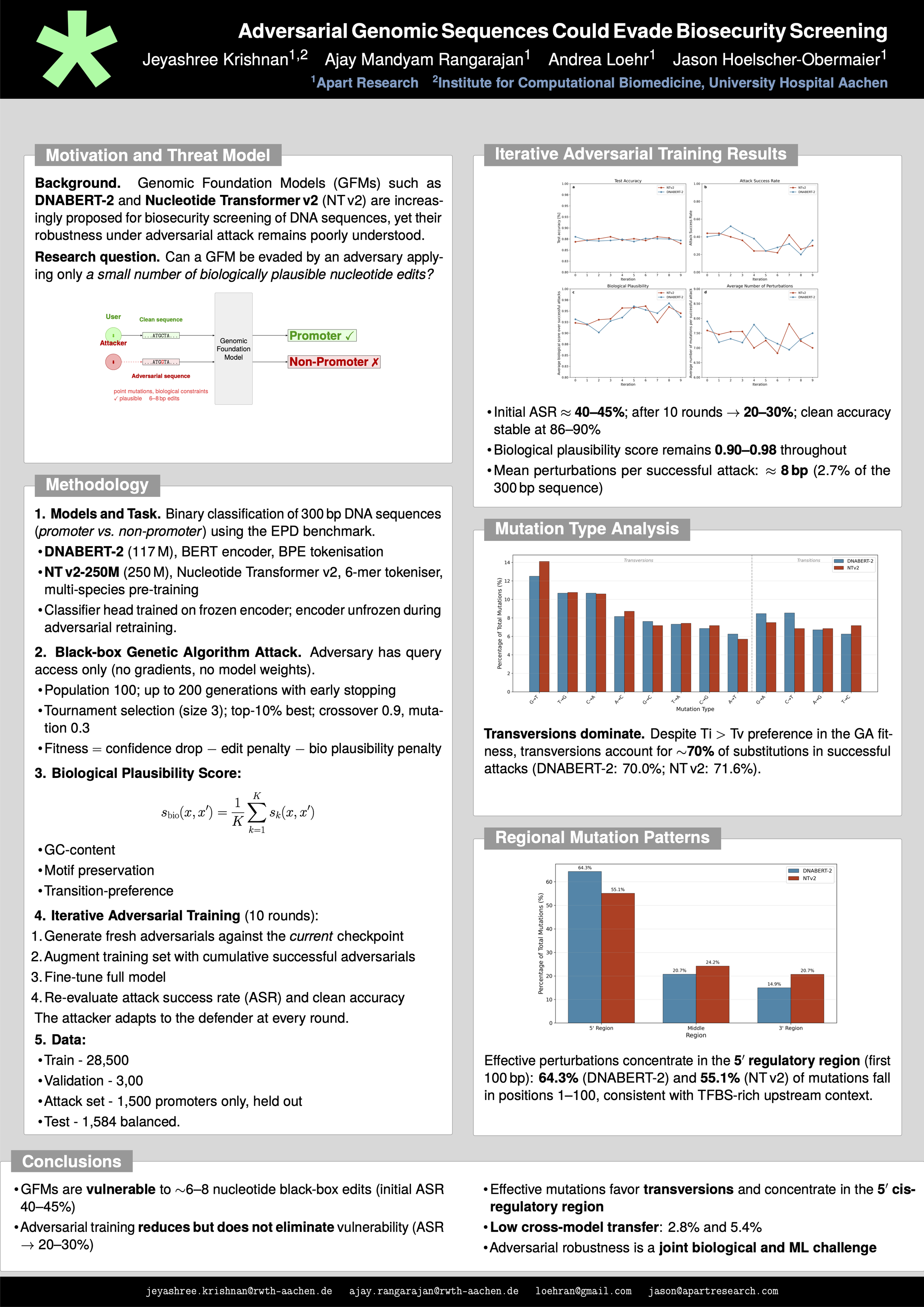

Recent biosecurity risk assessments identify biological foundation models as having high misuse potential over the coming decade: they lower the barriers to developing harmful biological agents, and many of these tools are open source and readily accessible to potential threat actors (Nelson & Rose, 2023; Webster et al., 2025). At the same time, Genomic Foundation Models (GFMs) such as DNABERT2 (Zhou et al., 2024) and Nucleotide Transformer v2 (Dalla-Torre et al., 2024) could help improve biosecurity defenses, yet their robustness under adversarial attack remains poorly understood.

To address this gap, we evaluate the susceptibility of GFMs to adversarial mutations. Since genomic inputs are discrete and subject to biological constraints, standard adversarial perturbation models for continuous domains do not directly apply (Kuleshov et al., 2021). We study promoter classification as a representative task and analyze GFM robustness under black-box threat models with nucleotide-level perturbations. Adversarial sequences are generated via genetic algorithms subject to explicit biological constraints, including GC-content bounds, mutation budget limits, and transition/transversion (Ti/Tv) bias.
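To make the setup concrete, the following is a minimal sketch of a constrained, black-box genetic search over nucleotide substitutions. It is an illustration under stated assumptions, not the procedure used in this work: the names `satisfies_constraints`, `mutate`, and `genetic_attack`, the defaults `max_edits`, `gc_tolerance`, and `transition_prob`, and the convention that `score_fn` returns the classifier's confidence in the true label (lower is more adversarial) are all hypothetical. Sequences are assumed to contain only uppercase A, C, G, and T.

```python
import random

# Transition partners: purine <-> purine (A/G), pyrimidine <-> pyrimidine (C/T).
TRANSITION_PARTNER = {"A": "G", "G": "A", "C": "T", "T": "C"}


def gc_content(seq):
    """Fraction of bases that are G or C."""
    return sum(base in "GC" for base in seq) / len(seq)


def satisfies_constraints(original, candidate, max_edits=5, gc_tolerance=0.05):
    """Check the mutation budget and a GC-content bound (hypothetical defaults)."""
    if len(original) != len(candidate):
        return False
    edits = sum(a != b for a, b in zip(original, candidate))
    if edits > max_edits:
        return False
    return abs(gc_content(candidate) - gc_content(original)) <= gc_tolerance


def mutate(seq, rng, transition_prob=0.5):
    """Substitute one random position; transition_prob sets the Ti/Tv bias."""
    pos = rng.randrange(len(seq))
    old = seq[pos]
    if rng.random() < transition_prob:
        new = TRANSITION_PARTNER[old]
    else:
        # The two remaining bases are the transversions of `old`.
        new = rng.choice([b for b in "ACGT" if b not in (old, TRANSITION_PARTNER[old])])
    return seq[:pos] + new + seq[pos + 1:]


def genetic_attack(original, score_fn, generations=20, pop_size=20, seed=0,
                   **constraints):
    """Black-box search: only score_fn(sequence) is queried, never gradients."""
    rng = random.Random(seed)
    population = [original]
    for _ in range(generations):
        children = [mutate(rng.choice(population), rng) for _ in range(pop_size)]
        feasible = [c for c in children
                    if satisfies_constraints(original, c, **constraints)]
        # Keep the lowest-scoring (most adversarial) feasible sequences.
        population = sorted(set(population + feasible), key=score_fn)[:pop_size]
    return population[0]
```

Because infeasible children are discarded before selection, every sequence in the population respects the edit budget and GC bound relative to the original. In practice `score_fn` would wrap a query to the model under attack.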

Our analysis reveals that GFMs are vulnerable to perturbation-efficient adversarial attacks requiring only a small number of nucleotide edits. These vulnerabilities are not random: effective adversarial substitutions favor transversions over transitions and concentrate in the 5’ regulatory region of promoter sequences. Iterative adversarial training reduces attack success rates from initial values of approximately 40–45%, but substantial attack success of about 20–30% persists after ten rounds of hardening. Our results establish adversarial evaluation of GFMs as a joint biological and machine learning challenge requiring domain-specific threat models and defenses.
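As an illustration of how substitution patterns of this kind can be tallied, the sketch below compares an original sequence with an adversarial one, classifies each substitution as a transition or transversion, and reports the share of edits falling in a 5’ window. The function names and the `five_prime_len` parameter are hypothetical; sequences are assumed to be equal-length, written 5’ to 3’, and restricted to A, C, G, and T.

```python
PURINES = {"A", "G"}


def substitution_kind(ref, alt):
    """Transition if both bases are purines or both pyrimidines, else transversion."""
    if ref == alt:
        raise ValueError("ref and alt must differ")
    return "transition" if (ref in PURINES) == (alt in PURINES) else "transversion"


def summarize_edits(original, adversarial, five_prime_len):
    """Count Ti/Tv substitutions and the fraction located in the 5' window."""
    positions = [i for i, (a, b) in enumerate(zip(original, adversarial)) if a != b]
    kinds = [substitution_kind(original[i], adversarial[i]) for i in positions]
    in_window = sum(i < five_prime_len for i in positions)
    return {
        "positions": positions,
        "transitions": kinds.count("transition"),
        "transversions": kinds.count("transversion"),
        "five_prime_fraction": in_window / len(positions) if positions else 0.0,
    }
```

Aggregating these summaries over many successful attacks would give the kind of Ti/Tv and positional statistics described above; the choice of window length is an analysis decision that this sketch leaves open.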