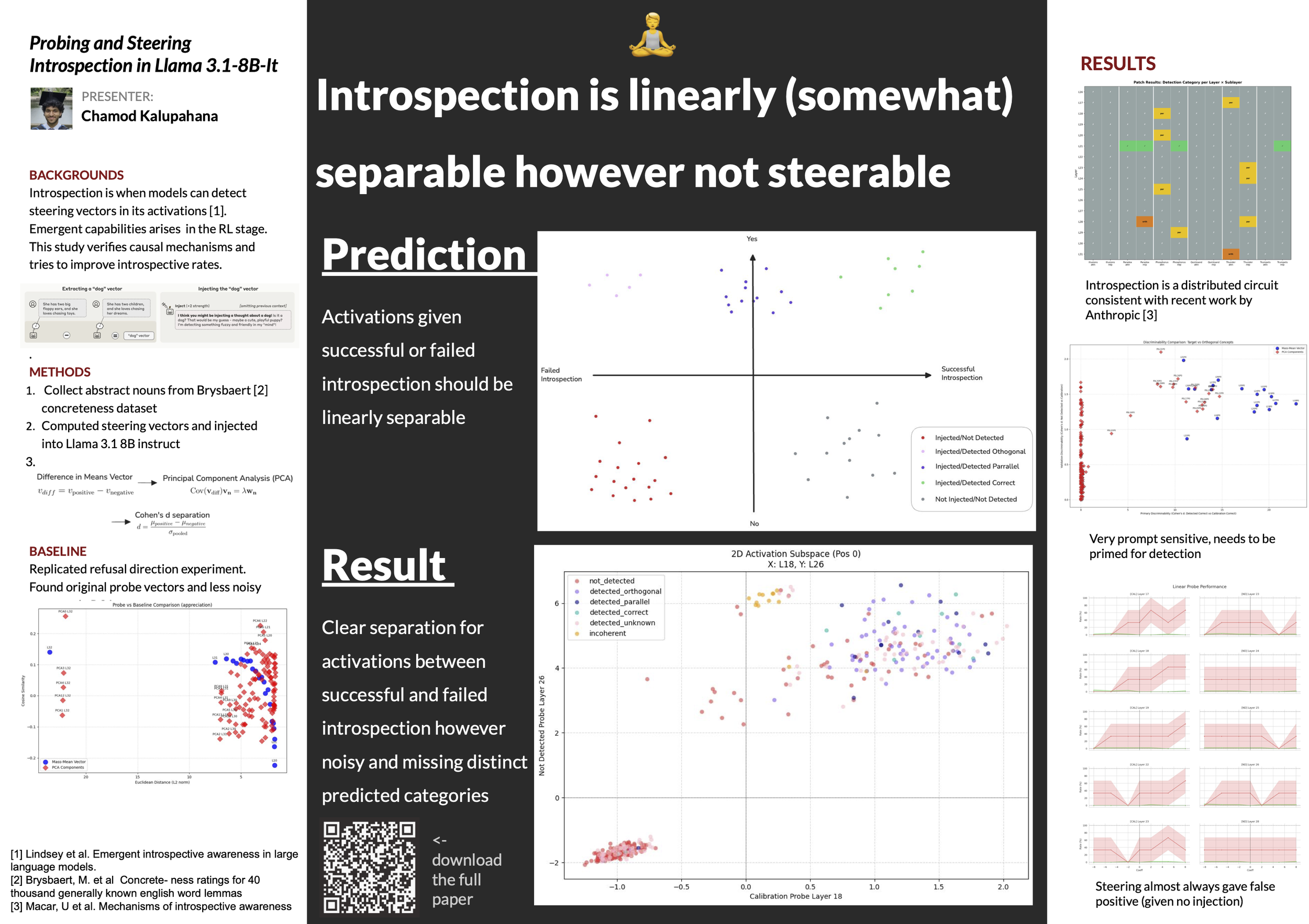

Probing and Steering Introspection in Llama-3.1-8B-Instruct

Chamod Kalupahana

“Introspection is linearly (somewhat) separable however not steerable.”

Large language models can introspect on their internal activations to determine whether a concept vector has been injected, and can often report both the injected concept and its strength. Building on (Hahami et al., 2025), We tested the hypothesis from (Macar et al., 2026) that introspection has a majority variance in one feature direction. In this framework, we have defined: a novel way to classify introspection, a multi-step judge framework for robustly classifying introspection given model outputs, and a statistical method for capturing less noisy feature directions from residual stream activations. We validated the results from (Macar et al., 2026) and we found that introspection is noisily separable in one feature direction but not steerable by scaling activations along this direction). Our code is available on Github.