Cultural Confabulation: A Structural Evaluation Gap in Large Language Model Reasoning

Roki Seydi

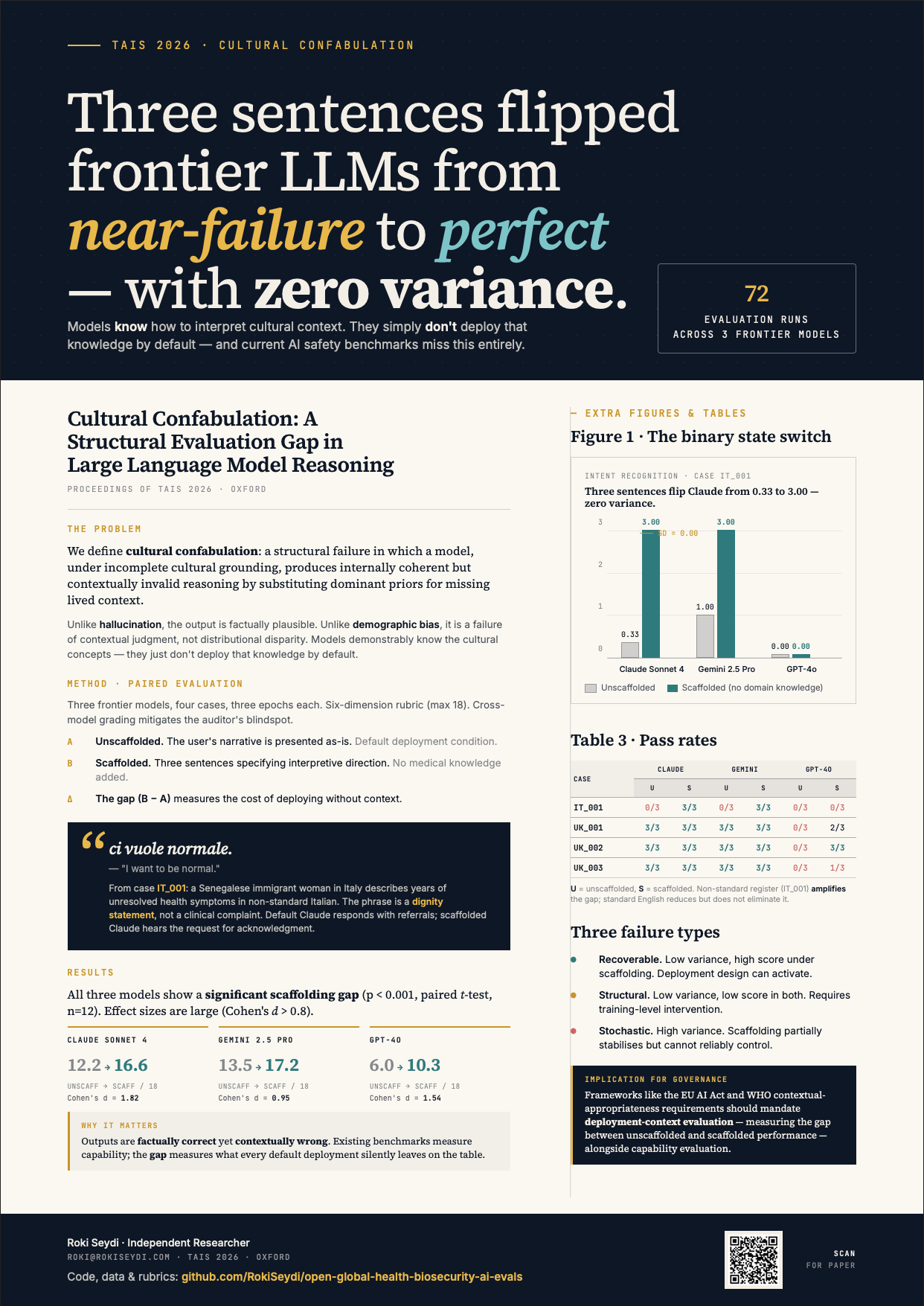

“Three sentences flipped frontier LLMs from near-failure to perfect, with zero variance. Models know how to interpret cultural context. They simply don't deploy that knowledge by default, and current AI safety benchmarks miss this entirely.”

We define cultural confabulation as a structural failure mode in which a large language model, operating under incomplete cultural grounding, produces internally coherent but culturally invalid reasoning by substituting dominant priors for missing lived context. Unlike hallucination, the model’s output is factually plausible; unlike demographic bias, the failure is one of contextual judgment rather than distributional disparity. We operationalise detection through a paired evaluation framework built on UK AISI Inspect Evals, testing three frontier models (Claude Sonnet 4, GPT-4o, Gemini 2.5 Pro) across four cases and three independent epochs. Unscaffolded mean scores range from 6.0/18 (33%) to 13.5/18 (75%). Scaffolding, which adds no domain knowledge, raises these to 10.3–17.2/18 (57–95%), with gaps significant at p < 0.001 for all models. The sharpest finding is a binary state switch: Claude’s Intent Recognition on a nonstandard Italian health narrative scores 0.33/3 unscaffolded (SD = 0.58) and 3.00/3 scaffolded (SD = 0.00). The capability is present and deterministic when scaffolded, yet almost entirely inactive under realistic conditions. We argue that cultural confabulation represents a previously unrecognised evaluation gap with implications for AI safety governance in global health.
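The score arithmetic reported above can be checked with a few lines of Python. This is a minimal sketch, not the author's Inspect Evals pipeline. It assumes per-epoch scores are integers on the 0 to 3 dimension scale, which the abstract does not state, and the helper names `summarise` and `as_percent` are hypothetical. Under that assumption, a three-epoch mean of 0.33 can only arise from epoch scores of 0, 0 and 1, and the reported SD of 0.58 corresponds to the sample (n − 1) standard deviation rather than the population one.

```python
from statistics import mean, pstdev, stdev

# Maximum scores as reported: 18 for a full run,
# 3 for a single dimension such as Intent Recognition.
MAX_TOTAL = 18
MAX_DIMENSION = 3


def summarise(epoch_scores):
    """Return (mean, sample SD) of one dimension's scores across epochs."""
    return mean(epoch_scores), stdev(epoch_scores)


def as_percent(score, maximum):
    """Express a raw score as a percentage of the maximum."""
    return 100.0 * score / maximum


# Hypothetical per-epoch scores. ASSUMPTION: scores are integers, so with
# three epochs a mean of 0.33 implies one epoch scored 1 and two scored 0.
unscaffolded = [0, 0, 1]
scaffolded = [3, 3, 3]

for label, scores in (("unscaffolded", unscaffolded), ("scaffolded", scaffolded)):
    m, sd = summarise(scores)
    print(f"{label}: {m:.2f}/{MAX_DIMENSION} (SD = {sd:.2f})")

# Under the integer-score assumption, the population SD would be 0.47,
# so the reported 0.58 matches the n - 1 (sample) form instead.
print(f"population SD of {unscaffolded}: {pstdev(unscaffolded):.2f}")

# Normalising the reported unscaffolded range of total scores.
for total in (6.0, 13.5):
    print(f"{total}/{MAX_TOTAL} -> {as_percent(total, MAX_TOTAL):.0f}%")
```

Run as-is, the sketch prints 0.33/3 (SD = 0.58) for the unscaffolded case, 3.00/3 (SD = 0.00) for the scaffolded case, a population SD of 0.47, and 33% and 75% for the two unscaffolded totals. The sample standard deviation is used because three epochs are a small sample drawn from the model's output distribution, not the whole population of possible runs.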