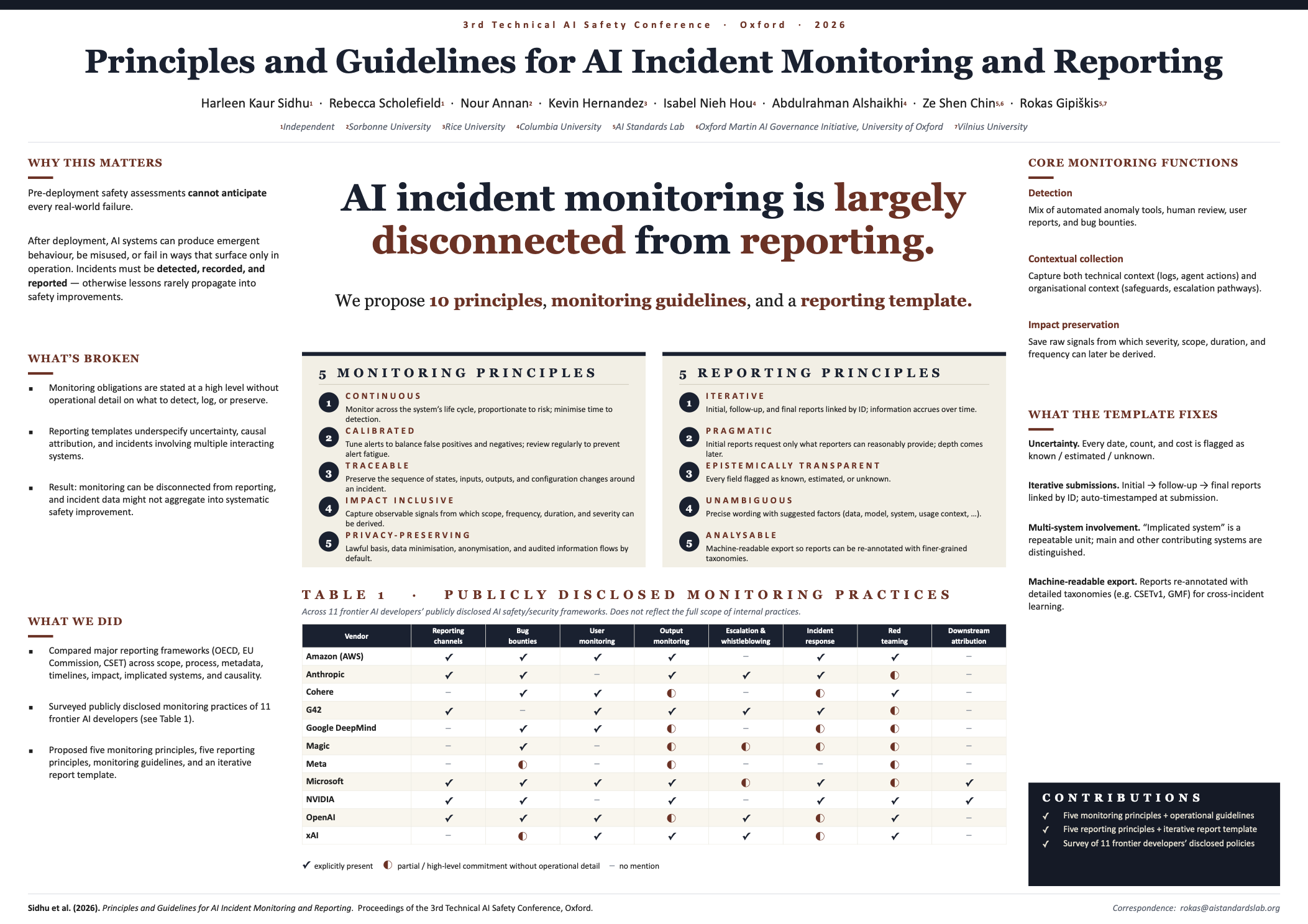

Principles and Guidelines for AI Incident Monitoring and Reporting

Harleen Kaur Sidhu, Rebecca Scholefield, Nour Annan, Kevin Hernandez, Isabel Nieh Hou, Abdulrahman Alshaikhi, Ze Shen Chin, Rokas Gipiškis

“AI incident monitoring is largely disconnected from reporting. We propose 10 principles, monitoring guidelines, and a reporting template.”

AI systems may produce failures after deployment that pre-deployment safety assessments do not anticipate. While various frameworks from regulatory bodies and independent efforts address aspects of AI incident monitoring or reporting, their monitoring obligations are often specified at a high level without operational detail, and their reporting templates leave dimensions such as uncertainty, causal attribution, and multi-system involvement underspecified. As a result, existing monitoring practices do not adequately capture the information that a robust reporting framework would require. This paper identifies the central gaps in current monitoring and reporting practices and proposes (i) five principles for post-deployment incident monitoring, supported by a set of operational monitoring guidelines; and (ii) five principles for incident reporting, operationalised through an incident reporting template that accommodates uncertainty and supports iterative submissions. Supporting material on various aspects of the incident lifecycle, including definitions, taxonomies, learning, and existing corporate monitoring practices, is provided in the appendices.