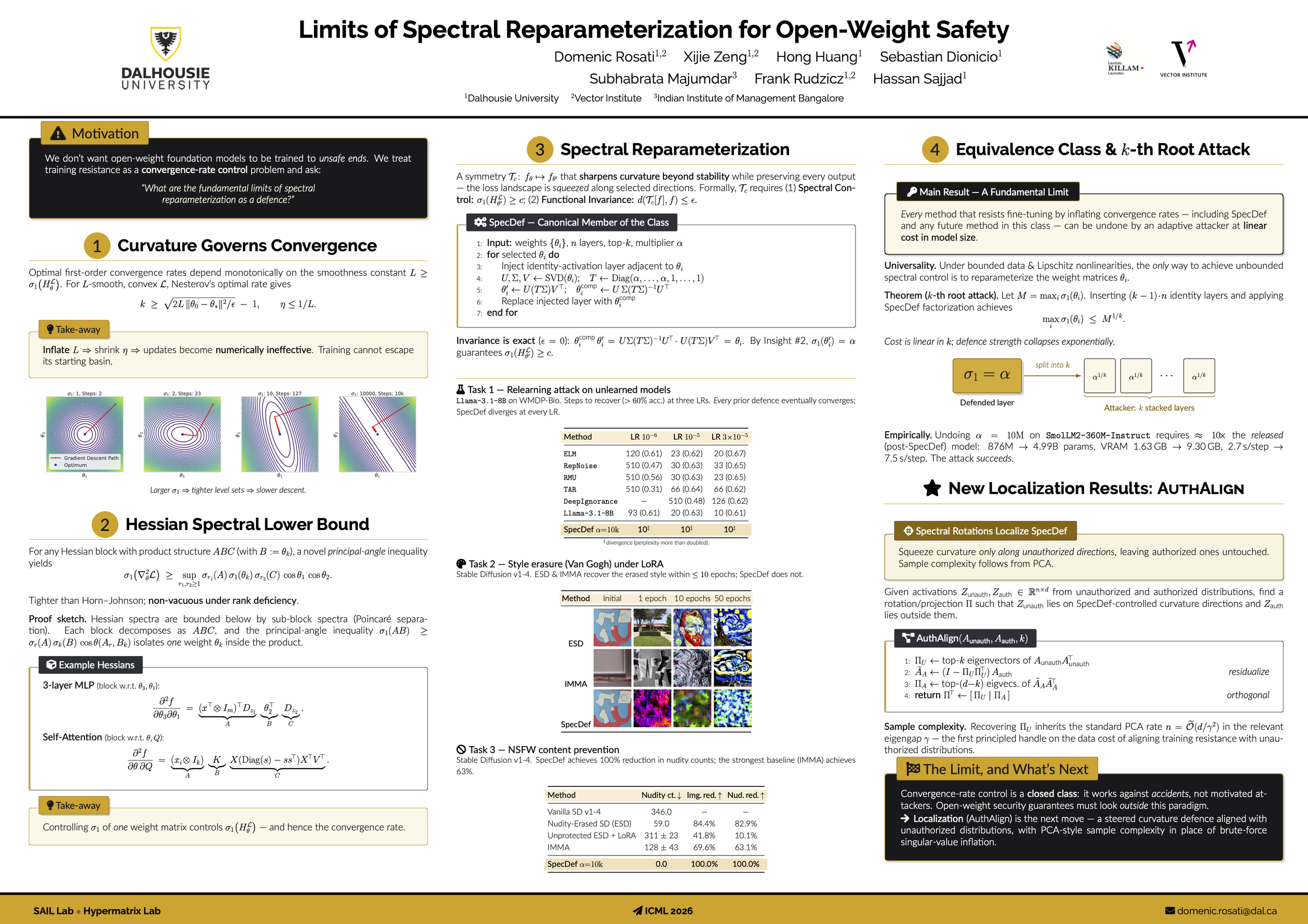

Limits of Convergence-Rate Control for Open-Weight Safety

Domenic Rosati, Xijie Zeng, Hong Huang, Sebastian Dionicio, Subhabrata Majumdar, Frank Rudzicz, Hassan Sajjad

“We don't want open-weight foundation models to be trained to unsafe ends. We treat training resistance as a convergence-rate control problem and ask: ‘What are the fundamental limits of spectral reparameterization as a defence?’”

Open-weight foundation models can be fine-tuned for harmful purposes after release, yet no existing training-resistance method provides theoretical guarantees. Treating these interventions as convergence-rate control problems allows us to connect optimization speed to the spectral structure of model weights, which we use to develop a novel understanding of training resistance through spectral reparameterization. Our main result is a fundamental limit on this entire class of methods: an attacker with sufficient knowledge can restore fast convergence at the cost of a linear increase in model size. To make this limit non-vacuous, we use SpecDef as a running example. SpecDef is an optimal and empirically strong representative of the class that provably and empirically slows first- and second-order optimization in non-adversarial settings. The implication is that overcoming the open-weight training-resistance problem will require methods that are not equivalent to controlling convergence rate.
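As a rough toy illustration of the convergence-rate view, consider least squares trained by gradient descent. This is not SpecDef and not the attack analysed in the paper. With a fixed step of 1/L, the number of steps needed to shrink the gradient grows roughly linearly with the condition number L/μ of the Hessian. A reparameterization w = D v that spreads the spectrum of the Hessian seen by the optimizer (D^T H D) therefore slows training, while an attacker who knows D can fold it back into the weights and recover the original rate. The diagonal matrix `D`, the problem sizes, and the helper `gd_iterations` are hypothetical choices made only for this sketch.

```python
import numpy as np


def gd_iterations(H, b, rtol=1e-6, max_iter=200_000):
    """Gradient descent on f(z) = 0.5 * z^T H z - b^T z, starting from z = 0.

    Uses the fixed step 1/L (L = largest eigenvalue of H) and returns the
    number of steps until the gradient norm falls below rtol times its
    initial value. For a quadratic, this count grows roughly linearly with
    the condition number of H.
    """
    step = 1.0 / np.linalg.eigvalsh(H)[-1]  # eigvalsh sorts ascending
    z = np.zeros_like(b)
    g0 = np.linalg.norm(H @ z - b)
    for k in range(max_iter):
        g = H @ z - b
        if np.linalg.norm(g) <= rtol * g0:
            return k
        z = z - step * g
    return max_iter


rng = np.random.default_rng(0)
n, d = 200, 10
X = rng.standard_normal((n, d))
y = rng.standard_normal(n)

# Undefended least-squares problem in the weights w: Hessian X^T X.
H_w, b_w = X.T @ X, X.T @ y

# Hypothetical defence: release v with w = D v, where D has spread-out
# singular values. An optimizer acting on v sees the Hessian D^T H_w D.
D = np.diag(np.logspace(0, -1, d))
H_v, b_v = D.T @ H_w @ D, D.T @ b_w

# Hypothetical attacker who knows D: fold it back in (w = D v) and train w
# directly, which is exactly the undefended problem again.
H_attack, b_attack = H_w, b_w

for name, H, b in [("undefended", H_w, b_w),
                   ("reparameterized", H_v, b_v),
                   ("attacker undoes D", H_attack, b_attack)]:
    print(f"{name:>18}: cond={np.linalg.cond(H):9.1f}  "
          f"GD steps={gd_iterations(H, b)}")
```

The reparameterized problem has a much larger condition number and needs many more gradient steps, while the informed attacker's problem is identical to the undefended one. In this toy, knowing D is enough to undo the slowdown for free; the paper's result concerns what such restoration costs in general.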