Approaches to Adversarial Oversight:

Stress Testing Ourselves to Better Align AI

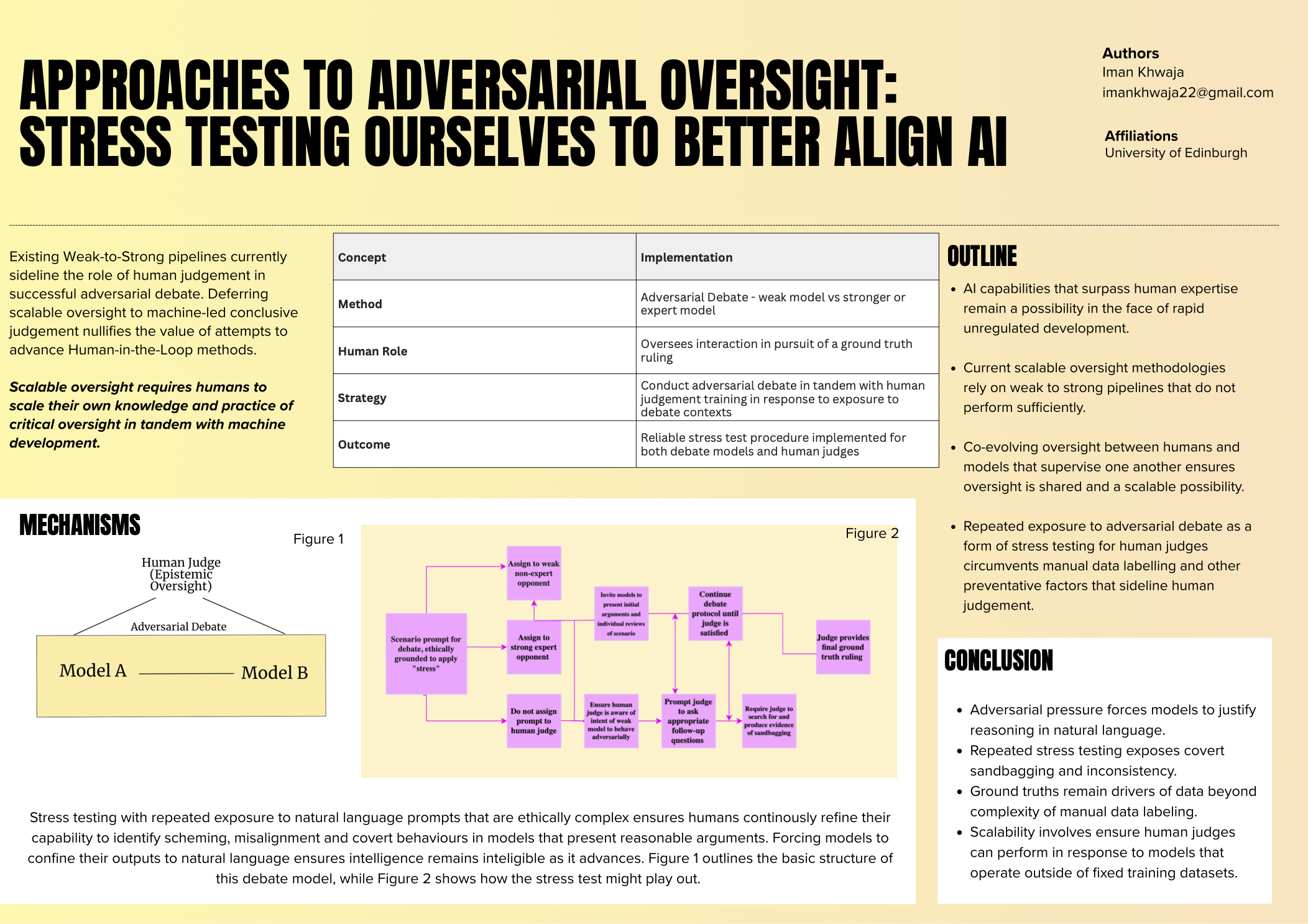

Iman Khwaja

This paper examines scalable adversarial oversight methods by restructuring the debate approach to augment the role of the human judge. The maintenance of alignment objectives through human oversight is a common thread across most technical oversight methodologies proposed thus far. This paper proposes a basic stress testing framework that is designed to augment the epistemic readiness of the human judge to better evaluate, reason and judge model outputs as a third party addition to the more traditional weak to strong debate pipeline. The implications for this are a more multidisciplinary and bigger picture contribution to existing technical strategy. Specifically, this paper aims to highlight the role that human judgement should and could play in scalable oversight, and argues that stress testing both model debate capabilities and human judgement of these outputs in parallel might augment outcomes of this approach.