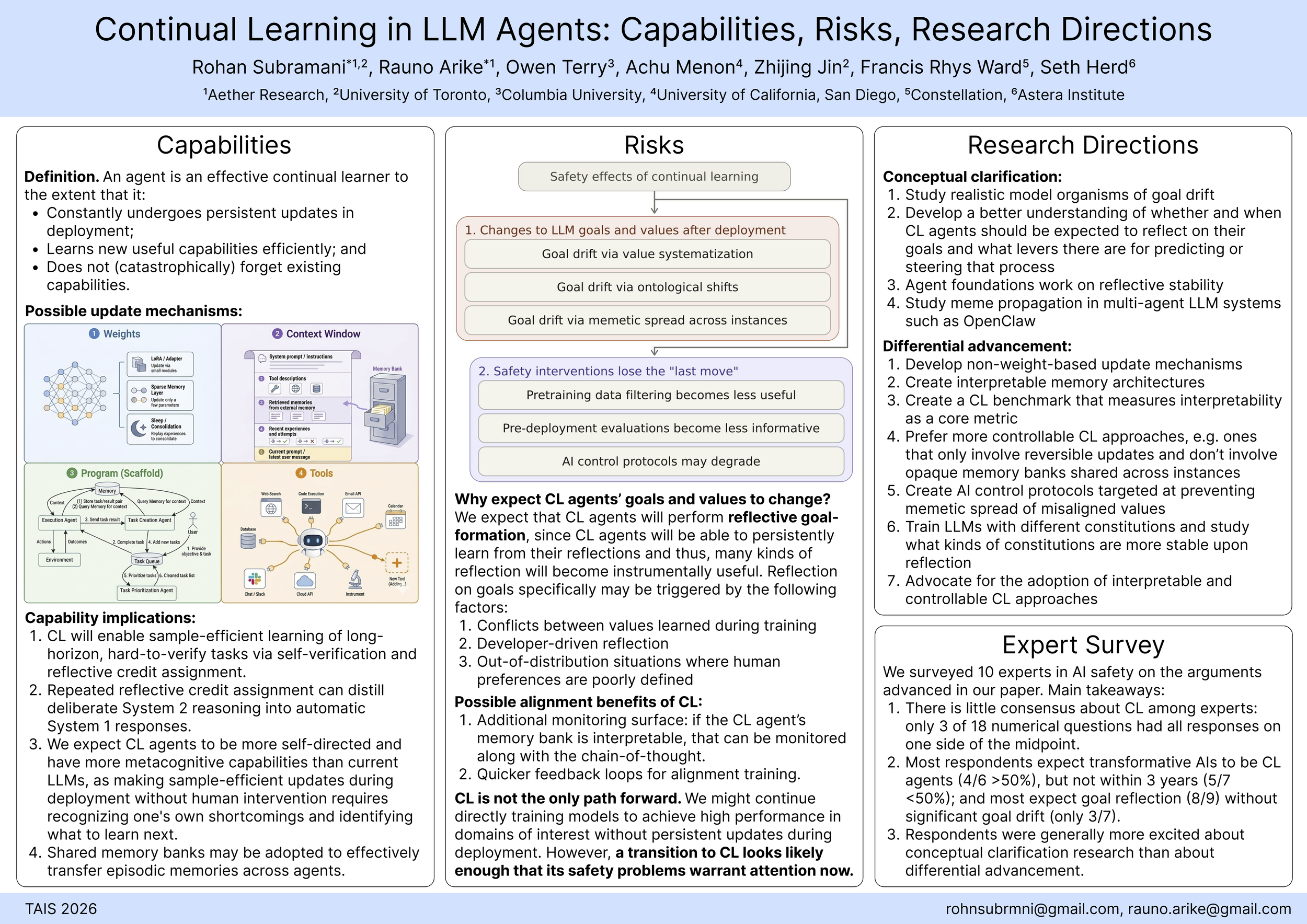

Continual Learning in LLM Agents: Capabilities, Risks, and Research Directions

Rohan Subramani*, Rauno Arike*, Owen Terry, Achu Menon, Zhijing Jin, Francis Rhys Ward, Seth Herd

*equal contribution

The lack of continual learning (CL) is a leading candidate for the most important shortcoming of modern LLM agents. Effective CL could dramatically increase the economic and societal impact of AI systems, but it could also amplify the risks they pose. In this paper, we explore in detail how CL may develop and what it could mean for AI capabilities and safety. Our analysis covers the update mechanisms that could enable efficient learning during deployment, the range of applications that could benefit from CL, and the ways CL could increase risks from AI misalignment and misuse. We remain uncertain how tractable it is to reduce AI risk by targeting CL, but we suggest that the AI safety community consider directing additional effort toward clarifying CL conceptually and toward differentially advancing safe CL implementations.